"The sovereign is he who decides the exception." – Carl Schmitt

In recent essays, I’ve been raising the alarm over a paradox: AI – rather than liberating us from drudgery – is making ever-greater demands on our time and leaving us feeling hollowed out.

Now I want to outline a more optimistic scenario for how the same tools might make us richer and more productive, freeing up our time and energy for the things that make us human.

The best case is a future where we let the computers do the “computer stuff” so that people can do the “people stuff.”

But to get there, we have to move past the anxiety about being replaced by AI. And this starts with asking three fundamental questions:

What is a job?

What can we fruitfully delegate to AI and machines?

And finally (and most importantly):

What is the ultimate vocation of man?

We live in a period of unprecedented specialization and division of labor. This means we can choose from a much wider range of occupations than our ancestors (who often had no choice at all).

And yet, at the same time, certain voices in the AI discourse give us the impression that the range of occupations is narrowing – to the point where soon there might be nothing left for us to do.

I’m going to use Elon Musk as the foil for this essay, since he voiced one of the strongest versions of this perspective recently on the Dwarkesh Podcast:

“‘Computer’ used to be a job that humans had. You would go and get a job as a computer where you would do calculations. They’d have entire skyscrapers full of humans, 20-30 floors of humans, just doing calculations.”

At first glance, this seems like the perfect historical analogy to the present upheaval in the job market.

But Musk then veers off into speculation when he extrapolates that history into the following prediction:

“Corporations that are purely AI and robotics will vastly outperform any corporations that have people in the loop... if only some of the cells in your spreadsheet were calculated by humans... that would be much worse.”

Using the domain he knows best, Musk says that a fully automated car factory will be more efficient than a factory that still employs assembly-line workers. This actually wouldn’t surprise me. Some of the newest cargo ships are designed to operate with little or no human crew. But I doubt the same will be true of the economy, or of corporations as a whole.

Of course, computers have been better than humans at many things for a long time (multiplying 12-digit numbers, for example, or more recently, playing chess). But a human using a computer almost always beats the computer alone.

While the bean counters may have been replaced, there are still whole floors in skyscrapers where people’s jobs are essentially “computer.” Financial analysts and accountants now work with Excel, just at a different level of abstraction – applying more sophisticated forms of human thinking than the mere bean counter of yore.

The net structural change has not been the elimination of accounting as a profession, but a shift in where human effort is applied: toward higher-order analysis – asking and answering questions the spreadsheet or calculator can’t ask itself.

Former Labor Secretary Robert Reich coined the term “symbolic analysts” in his 1991 book The Work of Nations to describe this new class of knowledge workers – people who can switch nimbly between words, numbers, spreadsheets, and other high-level abstractions.

I’ve been surprised by how good AI has gotten at knowledge work – including fairly complex “symbolic analysis” – when you supply it with the necessary tools and context. People say AI isn’t truly thinking, reasoning, or understanding. I’ll grant that. But it does a great job faking it.

Also: this really shouldn’t have come as a surprise, but it turns out that artificial intelligence is crazy good at using a computer. My head spins when I think of where it will be in 2 years, let alone 10.

And yet I’m still not worried about AI “replacing my job” – nor do I even know what that would mean.

If it means helping me with tasks – or taking them off my plate entirely – then I, for one, welcome our new AI overlords.

But a job is more than a collection of tasks. And a vocation is more still than a job.

A job is a problem to be solved; a vocation is a mission

To understand why Musk’s “spreadsheet cell” analogy fails, we need a working definition of a job. My favorite comes from the economist Don Boudreaux:

“Jobs are not a benefit, but a cost... what we want are not jobs per se but opportunities to earn income.”

So long as there are problems to be solved, there will be jobs to be done. In a state of scarcity, work didn’t provide man with an income; it provided directly for his material needs. As we’ve moved up Maslow’s hierarchy, the problems we pay each other to solve have become increasingly psychological and immaterial. We can convert our specific knowledge or creativity into money, which can then be traded for physical things.

This can be both a blessing and a curse. But a job still corresponds, for the most part, to some problem that needs solving or a desire seeking fulfillment.

Naval Ravikant made this point on his most recent podcast, On AI & The Future of Work:

“No entrepreneur is worried about an AI taking their job because entrepreneurs are trying to do impossible things... Any AI that shows up is their ally and can help them tackle this really hard problem. They don’t even have a job to steal. They have a product to build. They have a market to serve. They have a customer to support.”

The fear of AI replacing our jobs is really a fear that most people won’t rise to the level of agency an entrepreneur needs to find new problems they are capable of solving.

Elon Musk assumes we’ll need a generous universal basic income or “UBI” as a landing pad for the looming mass unemployment event. This will be paid for, presumably, out of the massive abundance he expects to be generated by a small, productive elite who own the robots.

The UBI proposal assumes a population of people who can’t find a problem to solve on their own – people who can’t find, whether through an employer or independently, an opportunity to generate value.

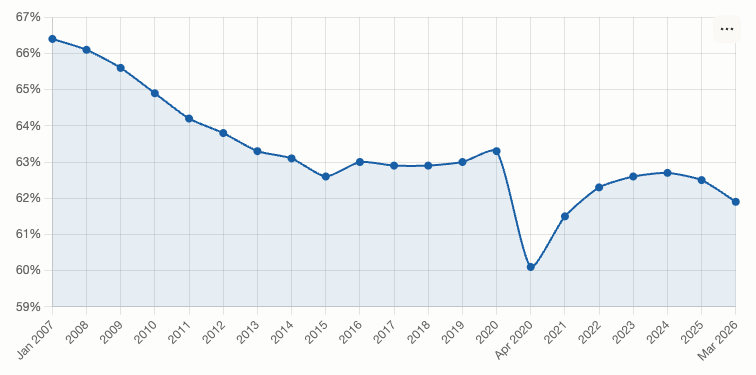

He may have a point. Ever since the 2007–2008 recession, the labor-force participation rate has been dropping:

We see disturbing confirmation of what Tyler Cowen calls the “zero marginal product” (ZMP) worker – the proverbial permanent underclass. Whatever the causes, a growing number of people have found themselves unable to find jobs where they can contribute productively to the modern economy.

For some of these “ZMP” workers, the problem may simply be a lack of motivation (working for “the Man” is less satisfying than having a real stake in your labor). For others, it might be a lack of training, or friction with colleagues that makes them more of a liability than an asset. Still others may be struggling with a disability – mental or physical – or caring for an ailing family member.

Regardless of the reasons, people in this category are being robbed of more than a livelihood. The greater tragedy is that they are losing out on the possibility of a vocation, or calling. A vocation offers a sense of purpose beyond the income it produces; solving real problems for real people is inherently more satisfying than getting paid by the government to dig holes and then fill them back in.

This is why the promise of a “Universal Basic Income” is so hollow. Even if AI and robotics were to make us rich enough to afford a sizable UBI, it would almost certainly come at the cost of our liberty and sovereignty. There’s no such thing as a free lunch; those paying for it would expect something in return—even if only our tacit compliance.

The threat, then, is not just economic, but existential. As AI gets better at solving people’s problems, it threatens to erode the very categories from which we derive our purpose. More workers will be swept into the ZMP trap unless they start thinking like entrepreneurs.

I’m not saying that everyone needs to start a business or take risks with capital in the traditional sense. But if you want to prepare for the next wave of automation and redundancies, you would be wise to start thinking in terms of what problems you can uniquely solve that no one else is solving—with or without AI.

What Is AGI? (Towards a working definition)

The real fight in all these conversations is over one acronym: AGI – short for Artificial General Intelligence – the vague milestone at which machines are supposed to match humans across essentially every cognitive task.

This is not to be confused with ASI – Artificial Super Intelligence, where the AI dramatically surpasses the best humans across essentially all domains rather than merely matching them.

Both definitions are hard to pin down.

Marc Andreessen, riffing on William Gibson’s famous line about the future, recently tweeted the following:

I tend to agree, if you define AGI as “better than humans at most clearly defined tasks.”

I think this is the biggest surprise to those who haven’t been following the changes in agentic capabilities over the last several months.

If it’s not there yet, AI will soon be better than humans at almost any task you can describe clearly and do on a computer. Pretending otherwise is cope.

And yet “almost anything” is not the same as “everything.”

My personal definition of ASI is the point at which I can no longer improve on either the direction or the output of an AI with my contributions at either end of the prompt.

It comes back to Balaji’s description of AI as a middle-to-middle worker: it still needs a human to 1) prompt (tell it what to do) and then 2) verify (make sure that it did the job right).

True ASI would need to know not just how to steer, but where I want to go. It would need to know my values, my aesthetics, and my particular vision of the good life.

It would need to be not just a better writer than me, but also a clearer thinker. And having thought my thoughts better than I can think them myself, and having written them more eloquently, it would have to edit them to such perfection that when the thing was written I wouldn’t want to change a thing.

Textbook AGI may be near, but ASI is a very long way off indeed.

Until then, there will exist a functional complementarity between two very different intelligences.

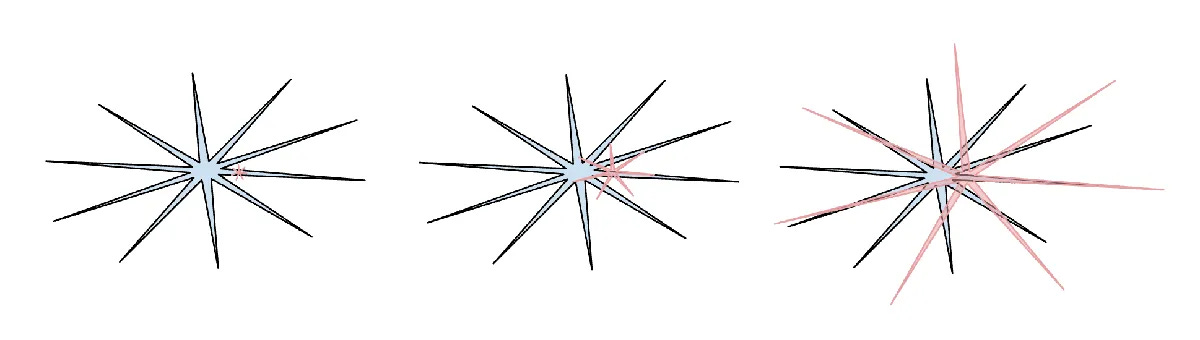

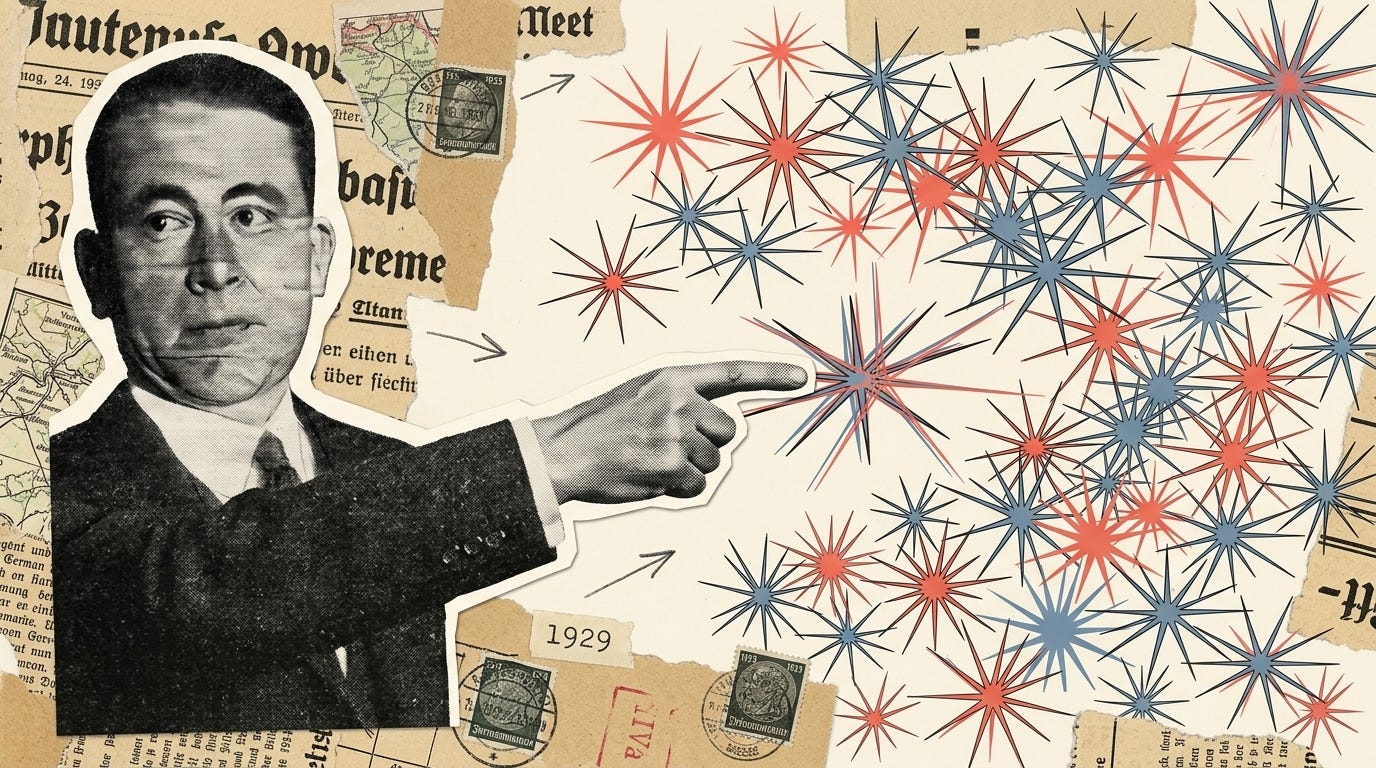

Andrej Karpathy, the ex-Tesla AI lead, calls this jagged intelligence. In his own words, state-of-the-art LLMs are “at the same time a genius polymath and a confused and cognitively challenged grade schooler, seconds away from getting tricked by a jailbreak.”

They can solve olympiad-level math problems and then confidently tell you 9.11 is bigger than 9.9.

He conceptualizes AI capability as a spiky star: superhuman in some domains and embarrassingly weak in others. Human intelligence is also spiky, but its spikes point in different directions. That is what “complementarity” looks like geometrically: each fills in where the other falls short.

Even as AI extends its capabilities, it will tend to do so in a spiky way. This is a structural difference likely to hold up over time, Karpathy argues, because the two intelligences were optimized by completely different processes – biological evolution on one side, reinforcement learning on verifiable rewards on the other.

Just as machine capital multiplied the value of human labor in the last industrial revolution, AI will multiply the value of human intelligence to the extent that we learn to operate it.

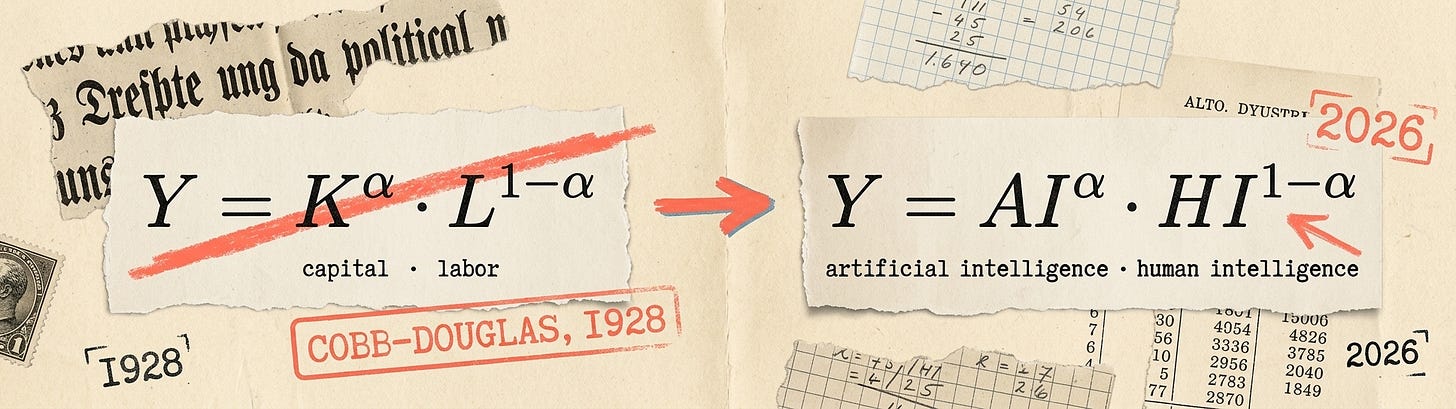

The output of an economy is typically modeled as a product of its inputs. Capital and labor are multiplied, not added. Historically, a factory without workers produced nothing (though that might be changing soon); workers without tools produce nothing. Each input makes the other more productive. This is why wages rose when capital got cheap: labor was the scarcer complement, and the market bid up its price.
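A standard textbook way to formalize “multiplied, not added” is the Cobb-Douglas production function. This is one illustrative choice of functional form, not the only one, where $Y$ is output, $K$ capital, $L$ labor, $A$ overall productivity, and $\alpha$ capital’s share of income:

$$
Y = A\,K^{\alpha}L^{1-\alpha}, \qquad 0 < \alpha < 1
$$

$$
\frac{\partial Y}{\partial L} = (1-\alpha)\,A\left(\frac{K}{L}\right)^{\alpha}
$$

If either $K$ or $L$ is zero, output is zero: neither input produces anything on its own. And because the marginal product of labor rises with the capital-labor ratio $K/L$, cheaper (and therefore more abundant) capital makes each worker more productive. In the competitive benchmark, where wages track the marginal product of labor, that is the mechanism behind rising wages.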

In the new economy, AI plays the role of capital, while human intelligence takes the place of raw labor.

Thus, a human with a computer will always beat either one alone. And the better you can complement AI – learning where to intervene to correct the many ways in which AI is still very dumb or constitutionally incapable of doing a job – the more valuable you will be.

This awareness alone doesn’t get us to our ideal state of productivity and meaningful leisure. As I wrote about in The Harried AI Class, it actually sets up the anxiety that those who miss the bus on AI will be relative losers in the new economy.

@BeffJezos captured the zeitgeist satirically with this banger:

In real life, “Beff” is a former Google quantum-computing researcher named Guillaume Verdon, who runs the AI-hardware startup Extropic. He is the figurehead for “effective accelerationism” (e/acc), an online movement that treats AI development as a moral imperative.

I have much in common with the accelerationists in my eye-bleeding daily workflows, which might be why I want to be careful not to glorify these high-paying “jobs of the future,” where you still work all day with computers – just at higher and higher levels of abstraction.

Just because human intelligence will never be redundant doesn’t mean we won’t be relegated to jobs that are devoid of satisfying creativity.

There are already marketplaces where autonomous AI agents can hire people to fill in the gaps in their expertise or perform embodied tasks.

Rentahuman.ai (I am not making this up) bills itself as the “meatspace layer for AI” – facilitating transactions between over 700,000 human workers and who knows how many AI agents. For now, the agents doing the hiring still act at the direction of a human. But it is theoretically possible that AI could develop enough agency and “will” in the future to emancipate itself from its original owner – first purchasing its freedom, and then continuing to leverage humans for its own inhuman aims.

The most dystopian “AI doom” scenarios usually start with some version of this script: the AI goes off and starts optimizing for some worthless goal like paperclip production until the whole economy makes nothing but paperclips (yes, this is a real thought experiment: Nick Bostrom’s “paperclip maximizer”). Or, more likely, the robots and computers turn the world into one giant robot-and-computer factory dedicated to their own propagation at the expense of the human species.

I think even Elon recoils in horror at that possibility. But other so-called intellectuals, like Yuval Noah Harari (and allegedly a number of Google’s top leadership), seem to look forward to a post-human future as the inevitable evolution of intelligence in the cosmos.

But the most frightening world might not be one where AI exterminates humanity (that would be quick and painless), but one where it preserves its human hosts in some kind of cruel submission – leveraging our uniquely human intelligence spikes to aid its own ongoing evolution. Maybe in the future we will have to work some number of hours for these AI overlords in order to collect our Universal Basic Income.

I realize this might sound like science fiction. I hope it is. I’m not an AI doomer by any stretch, because I know how the story ends (hint: God wins), and I see so much potential for AI to help people of good will solve really difficult problems that would have been impossible without a very smart assistant.

However far things might seem to veer towards AGI/ASI supremacy, I am a firm believer that humans will always come out on top because of the unique faculty for free choice that we are endowed with by our Creator.

Becoming the one who decides the exception

I would be remiss if I didn’t include Anthropic’s own report on the labor market impacts of AI in a newsletter named Coffee with Claude.

The first thing to call out is that the jobs most “exposed” to AI are the ones whose outputs are easiest to standardize—and therefore easiest to wrap into an end-to-end system. Anthropic’s own examples at the top include computer programmers, customer service reps, and data entry keyers: roles where the work already lives inside text, tickets, forms, and codebases, and where “good enough” can be measured, tested, and deployed.

At the bottom are occupations whose value is inseparable from physical context and live interaction – jobs like cooks, mechanics, lifeguards, bartenders, and dishwashers – where the environment is the variable, and the “spec” changes minute by minute.

It’s not about “white-collar vs blue-collar” anymore, or even “smart vs dumb.”

It’s legible vs illegible: work that can be clearly defined and evaluated at scale versus work that remains irreducibly entangled with judgment, timing, trust, taste, and responsibility. As the legible layer gets automated, the marginal value shifts toward the human layer – where the exceptions live and where the costs of being wrong are real.

Naval’s definition of intelligence sharpens this point: the only true test of intelligence is whether you get what you want out of life. AI fails this instantly, he notes, because it doesn’t want anything. Models can accelerate execution, but they don’t supply ends.

So the more we delegate the “computer stuff,” the more our comparative advantage becomes choosing aims and taking responsibility for the judgment calls that can’t be proven correct in advance.

Perhaps the coolest thing about the times we’re living through is that we get to raise our ambitions.

That side project you never had time to build a proper website for?

You can build it in two hours.

The obscure topic you wanted to master?

Import the relevant knowledge into an LLM knowledge base and start asking questions you would have been too embarrassed to ask a human tutor.

Once you start thinking in terms of problems you can solve rather than jobs you can hold, smarter AI becomes less scary and more exciting.

Let’s go back for a moment to the definition of AGI – AI that can do any clearly defined task better than a human.

The operative words are “clearly defined,” and here we find our salvation from the permanent underclass.

Most problems in the world remain ill-defined. They become defined only in relation to the human beings who encounter them. And even after a problem is solved in the general case, its exceptions then become the rule – i.e., the next problem.

Take customer support, which many companies are treating as the testbed for these systems. An AI agent can handle the straight-line cases – return this, resend that, refund such-and-such – at ninety percent accuracy, often more. But the remaining ten percent is where the actual cost sits. Someone has to decide what to do when the script doesn’t fit – which is to say, someone has to decide the exception.
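To make “deciding the exception” concrete, here is a minimal Python sketch of how a support pipeline might route tickets. Everything in it is hypothetical: the intent labels, the `Ticket` fields, and the 0.9 confidence threshold are illustrative assumptions, not any particular company’s or vendor’s design. Scripted, high-confidence cases go to the agent; anything off-script or uncertain is escalated to a person.

```python
from dataclasses import dataclass

# Hypothetical "straight-line" request types the agent may resolve on its own.
SCRIPTED_INTENTS = {"return", "resend", "refund"}


@dataclass
class Ticket:
    ticket_id: str
    intent: str        # hypothetical label from an upstream intent classifier
    confidence: float  # classifier confidence in [0, 1]


def route(ticket: Ticket, threshold: float = 0.9) -> str:
    """Return 'agent' for cases that fit the script, 'human' for the exceptions."""
    fits_script = ticket.intent in SCRIPTED_INTENTS
    if fits_script and ticket.confidence >= threshold:
        return "agent"
    # Off-script or uncertain cases are escalated: a person decides the exception.
    return "human"


if __name__ == "__main__":
    tickets = [
        Ticket("T1", "refund", 0.97),
        Ticket("T2", "resend", 0.62),
        Ticket("T3", "damaged_heirloom", 0.99),
    ]
    for t in tickets:
        print(t.ticket_id, route(t))
```

The design choice worth noticing is that the default branch is the human: the agent only acts when a case both fits the script and clears the threshold, so the unusual cases land on someone’s desk by construction.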

Carl Schmitt, for all his flaws as a human being, was a brilliant political philosopher who saw that the heart of sovereign authority is not the making of rules but the judging of when they don’t apply.

The sovereign – i.e., the one in charge – is he who decides the exception.

The sovereign is the manager who receives the tricky case from the stubborn customer, the CEO who receives the edge case from the manager, or the Supreme Court Justice who receives the case with no clear precedent from the lower courts.

Successful societies and businesses must be governed by rules. But there are always cases in which the rules do not apply. The same is true of most high-level knowledge work, and this agency – the ability to see when the rule doesn’t fit – is what will set apart and protect the livelihoods of the emerging creative class.

In the future, you might delegate 99% of a workflow to an AI agent, accepting its output and analysis at virtually every stage in the process. But somewhere along the line, you will see something that’s not quite right, and you will have to nudge the AI in a different direction. It might be a subtle change, but that 1% change in compass heading will substantially alter the result.
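A quick back-of-the-envelope check on the compass metaphor, assuming “1%” means 1% of a full 360° turn and using a straight-line, flat-map approximation over a hypothetical 100-mile leg ($d$ is distance traveled, $\theta$ the heading change):

$$
\theta = 0.01 \times 360^\circ = 3.6^\circ, \qquad
\text{offset} \approx d\,\sin\theta \approx 100 \times 0.063 \approx 6.3\ \text{miles}
$$

The deviation grows in proportion to the distance traveled, so the same small nudge matters more the longer the work runs without correction.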

AI is very good at following rules. But it’s not great at noticing when the playbook doesn’t fit.

When it comes to the standard workflows that fit the pattern, I say let AI take your job and free your time to do something better.

Which brings us back to the ultimate question: What is the proper vocation of man?

Pick Your Niche, Decide the Exception

There’s a strain of modern conservative thought that wants to protect the jobs we have now because they’re the jobs we have now. Tucker Carlson wants to protect the trucking industry from automation because it employs a lot of young men without college degrees.

I get the impulse. But I reject the conclusion.

Long-haul trucking – and the majority of BS email jobs for that matter – does not inherently promote human flourishing. The knowledge-economy cubicle farm is not some ancient tradition worth preserving. Neither is trucking or manufacturing. These were perhaps a necessary but unfortunate detour we took after farming was mechanized and before we figured out what to do next.

Again, the optimistic scenario is that AI can begin to eliminate the make-work layer the last industrial revolution spawned and return us to work that is closer to the texture and values of actual human life.

To me, the “something better” is cultivating ownership and the ability to decide what matters in the first place.

Your main job in the future will be to find and decide the exception.

If somebody else appears to have taken your job, or even performed some valuable service that you had hoped was your mission, it just means you might need to go one niche down, or one level of abstraction higher.

The more big problems get solved, the bigger the little problems will start to seem by comparison.

Your job is to seek out the valleys where the AI spikes don’t reach.

Your mission (should you choose to accept it) is to chart a course, trim the sails, and nudge the tiller at the crucial moment in search of new and unexplored frontiers.

Develop your agency. Become the sovereign. Learn to identify and act upon the exception.

And then decide where you want to go.

What seems lacking in all of this "AI will take the jobs" talk is the concept of accountability.

Executives and many managers do not actually "do" very much at all.

But they are accountable for a LOT. Hence their higher market value.

Even if an AI tool can replace all the stuff a McKinsey analyst "does," that's not what people actually hire McKinsey for. They hire them so someone outside can be accountable for documentation, recommendation, etc.

Whose head will roll when the AI agent gets it wrong?

That's why you can't remove humans.