The biological and spiritual toll of vibecoding

A 10x developer admits AI makes him tired and the rest of the internet follows suit.

Happy Saturday!

It’s been a busy week in the AI world. So busy that it feels hard to keep up while still getting all my work done.

My way of coping has been to treat keeping up as a kind of work, spending my sharpest early-morning hours on my best writing and thinking, and sharing the fruit of that labor with you (my dear reader).

My hope is that by contextualizing the news about AI, and processing it in a practical way, I’ll find my way to a wiser relationship with AI in the rest of my work. Unlike the newsletters that attempt to breathlessly report on every daily development, I’m holding off on publishing until the end of the week – looking back to see what still seems important.

If you’re feeling anxious about staying current, my overriding advice is: don’t. You’re going to be fine even if you ignore AI altogether and focus on your humanity. But if you want to stay apprised of the bleeding edge (without your eyes bleeding from scrolling X all day), keep reading.

— Charlie

P.S. It means a lot to me to know who’s following along, since this is still an experiment in a new subject. Do me a favor and tell me in the comments which name you prefer:

Coffee with Claude (lofi, slightly caffeinated but chill vibes)

Café Context (More generic, no trademarks)

And be sure to share this if you find it valuable.

Alright let’s get into it.

DEEP DIVE: Simon Says

Last week I published a piece called The Harried AI Class, explaining why the most savvy AI users are working more, not less. The tl;dr is that if these tools don’t allow us to spend more time doing what we enjoy – at least in the long run – we should throw them in the sea.

The next day, Lenny Rachitsky made much the same point on his podcast:

“AI is supposed to make us more productive. It’s supposed to give us more time off. It feels like the people that are most AI-pilled are working harder than they’ve ever worked.”

His guest was Simon Willison — co-creator of Django (the web framework that powers Instagram, Pinterest, Spotify, and thousands of other platforms), prolific blogger, and one of the most enthusiastically pro-AI voices in the whole community. He’s known to test every new AI model by asking it to draw an SVG image of a pelican riding a bicycle, which turns out to be a surprisingly good benchmark.

In response to Lenny, he confided:

“I’m finding that using coding agents well is taking every inch of my 25 years of experience as a software engineer and it is mentally exhausting. I can fire up like four agents in parallel and have them work on four different problems and by like 11 a.m. I am wiped out for the day. Because there is a limit on human cognition in how much — even if you’re not reviewing everything they’re doing — just how much you can hold in your head at one time.”

That clip racked up 1.8M views and 600 comments, and I suspect it went viral because a lot of people are feeling this beneath the surface. The AI conversation on X right now is mostly people beating their chests, saying “Look how awesome I am. I spent $60,000 in tokens,” as if that in itself were an accomplishment.

So when somebody of Simon’s stature comes out and says, “this is exhausting,” the floodgates open, because it turns out we’re all kind of exhausted, and it becomes safe to say so.

He also makes a key point about FOMO:

“I’ve talked to a lot of people who are losing sleep because they’re like, ‘My agents could be doing work for me. I’m just going to stay up an extra half hour.’ And they’re waking up at 4 in the morning. That’s obviously unsustainable. There’s an element of sort of gambling and addiction to how we’re using some of these tools.”

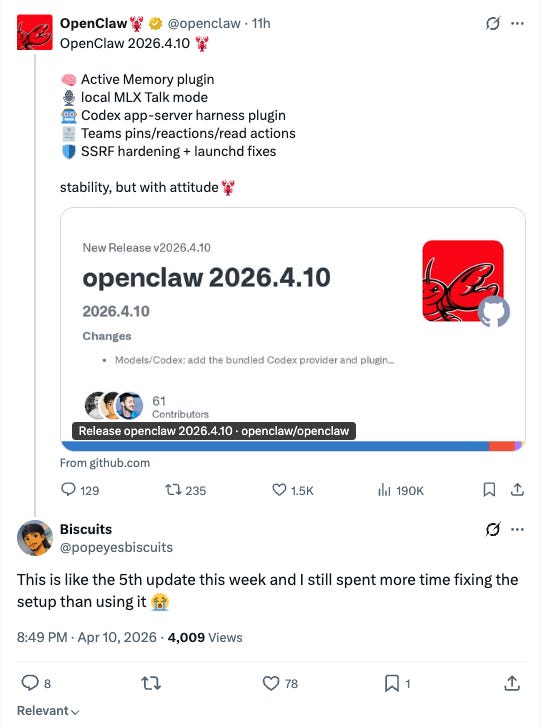

This frenetic anxiety mixed with manic excitement (often labeled “AI psychosis”) is especially apparent in the “OpenClaw” community. For the uninitiated, OpenClaw is an open-source AI agent platform — essentially a portal for controlling autonomous agents from your phone, with cron jobs for scheduling, custom memory systems, and a skill marketplace — giving AI the tools, hands, and brain to work longer and more autonomously on a wide range of tasks.

OpenClaw and its creator Pete Steinberger were recently subsumed into OpenAI, and Jensen Huang, the CEO of NVIDIA, called for every company to have an “OpenClaw strategy” at GTC 2026.

This underscores the widespread temptation to spend all of our time optimizing these agents and handing them root access to our lives – our email, our bank accounts, maybe even our kitchen appliances – on the assumption that they will eventually save us time. Never mind that a number of OpenClaw’s most dedicated users admit that they spend more time maintaining their systems than those systems save them in actual work.

At the same time, we have to acknowledge that at the root of this psychosis is a very real quantum leap in the capabilities of software engineers like Willison who are leaning into the new coding paradigm. You really can get a lot more done, and in less time... albeit at a cost.

When our thoughts run ahead of our hands

The problems, I think, arise when our thoughts are able to run ahead of our hands. This sets the stage for a special kind of tiredness, and a special kind of mess.

Simon noticed this in his own work:

Simon: Sometimes I’ll have an idea for a piece of software, and I can knock it out in like an hour and get to a point where it’s got documentation and tests and all of those things, and it looks like the kind of software I previously spent several weeks on. And yet I don’t believe in it. I got to rush through all of those things. Most importantly, I haven’t used it yet.

It used to be if you looked at software and it had high quality tests and documentation, everything, it meant it was good. And now that signal is gone.

Lenny: It’s almost like we need a proof of work.

Simon: Proof of usage. Exactly.

The thing you built looks 95% finished, but that last 5% is 90% of the total effort.

I don’t know this from experience when it comes to software, but I know it’s true for writing. When you rely too much on AI, you end up with a whole lot of volume that’s not quite there. You didn’t quite tie up the thought. You didn’t quite do enough of the thinking to close the loop.

Tiago Forte — the productivity guru behind the PARA notetaking method and Building a Second Brain — expressed this eloquently:

“I went all in on AI early. And after a while, something felt off. I’d lean on it to handle the hard thinking for me. Draft the strategy. Decide the angle. Structure the argument. It was faster, sure. But when I read the output back, I felt nothing. No relationship to the words on the page. My name on something I didn’t really write. Worse, my curiosity started to disappear. I could get answers so fast that questions stopped forming. I started losing touch with my own point of view on topics I’ve spent a decade thinking about.”

He calls this cognitive debt:

“The debt of not having done the thinking to arrive at a conclusion you believe in. The debt of not understanding the decisions behind the plan, and therefore not trusting it. The debt of generating a polished document you don’t care enough about to do anything with.”

The more you try to smooth out a misshapen draft with AI, the more it resembles Michael Jackson after so many rounds of plastic surgery. The punctuation may be impeccable (so impeccable that you start swapping em dashes for more human-looking en dashes). But if you’re trying to replace the actual thinking that goes into a great product or a great essay, you’re going to produce slop.

When you read an essay or social media post that has clearly been assisted by ChatGPT — or even just edited heavily from a transcript — there’s always this question mark. Have they done the thinking? Have they even read the whole thing they ostensibly “wrote”? Or did their thoughts run ahead of their hands and they just shipped it?

To extend ourselves is to dilute ourselves

I believe the late media theorist Marshall McLuhan is the prophet for the AI age.

In his 1964 book Understanding Media: The Extensions of Man, he observed that every extension of ourselves — through technology or media — is simultaneously an amputation. The wheel extends the foot but amputates the walker’s intimate relationship with the ground.

He drew on the Greek myth of Narcissus — a name that comes from the word narcosis, meaning numbness:

“This extension of himself by mirror numbed his perceptions until he became the servomechanism[1] of his own extended or repeated image.”

A copy of a copy of a copy.

Sherry Turkle updated McLuhan for the social media era.

“As we distribute ourselves, we may abandon ourselves,” she wrote in Alone Together.

This quote always makes me think of Bilbo Baggins, describing the toll the Ring of Power has taken on his substance:

“I feel all thin, sort of stretched, if you know what I mean: like butter that has been scraped over too much bread.”

The ring gave Bilbo superpowers but spread his substance thin. AI also promises us superpowers but spreads our attention, our intention, and our actual thinking across too much surface area to execute on any of them.

I think this is what Simon is experiencing. It’s a lessening of our ontological weight, our very being. And unless we take the time to coalesce and reintegrate our identities (daily!), we risk becoming a kind of Gollum, still clinging to the ring but hating what we’ve become.

It’s worth mentioning here that the dominant new mode for vibe coding is voice dictation over traditional typing, using tools like Wispr Flow. Dictation increases the speed and reduces the activation energy for getting our thoughts down on paper, allowing those thoughts to get even further ahead of our hands.[2]

My stats tell me I’ve dictated roughly 1.5 million words with Wispr Flow in the past few months.

But what is all that wasted breath?

“I say unto you, That every idle word that men shall speak, they shall give account thereof in the day of judgment.” — Matthew 12:36 (KJV)

I don’t know how many of those million-plus words were idle, per se, but I’m pretty sure it’s not zero.

Putting aside the loftier questions of sin and judgment, there’s a more mundane adverse physical side effect of the dictation paradigm.

When we talk so much (when we blow hard, so to speak) we are exhaling more CO2 than the body can comfortably produce. We incur an actual CO2 debt that the body has to work harder to replenish. Ray Peat has made the underappreciated argument that CO2 is a critical regulator of oxygen delivery to tissues and cellular energy production. When you exhale CO2 faster than your metabolism generates it, you shift into a mildly alkalotic state. Blood vessels constrict, oxygen delivery to the brain drops, and you get that foggy, depleted feeling.

Throw in the well documented phenomenon of “screen apnea” — the unconscious tendency to hold your breath or breathe shallowly while staring at screens — and you have a recipe for a new kind of exhaustion.

Lastly, the eyes become hypertrophied; hyperfocused on the little symbols on the screen. We lose the more expansive view and peripheral awareness. Our bodies are amputated.

The uncertainty factor

There’s one more reason worth naming, in order to combat the root causes rather than just addressing the symptoms.

Simon says:

“I’ve got 25 years of experience in how long it takes to build something and that’s all completely gone. It doesn’t work anymore. I can look at a problem and say, ‘This is going to take two weeks, it’s not worth it.’ And now it’s like, yeah, but maybe it’s going to take 20 minutes.”

Dealing with uncertainty is tiring. When our forecasting abilities fail, we’re more likely to fall into the trap of stretching ourselves too thin, and taking on more projects than we can bring to fruitful completion.

Add to that the fact that much of what you’re building right now will likely be rendered redundant by the next generation of models or technologies. You might spend 5 hours optimizing your blog archive for SEO (it would have taken 50 hours a year ago). But in six months you find that SEO has become a useless channel now that anyone can do the same optimization in 5 minutes. You could build a whole software suite that would have been best-in-class five years ago and now it’s going to be replaced by some new interface that just solves the problem in a fraction of the time.

The conclusion I draw is that it’s more important than ever to trust that you are working on the right things – things that will last. My solution starts with prayer. I pray for the Almighty to convict me if I’m working on the wrong thing, so that I might not be caught unaware. This alleviates the exhausting suspicion of futility and frees me to enjoy the process.

You also have to be willing to throw things away, even if they took a lot of tokens and effort to build.

You should spend your best thinking hours (for me this is the morning, before the kids are up) engaged in real unadulterated thought. Before I even think about starting to orchestrate my agents, I put literal pen to literal paper.

Take a voice note if you must, but use a separate app and don’t send it as a prompt until you’re ready to switch into orchestration mode.

Lastly, accept that you will probably only be able to do really good work for about four hours a day. Work like a lion: Sprint, rest, repeat. Use the rest of the day for outdoor chores that rejuvenate the mind and allow it to relax and go into whatever shape it wants. For me, that’s swimming or some other regenerative movement. Let your mind unspool, without being directed. Let your lungs breathe without speaking.

Simon says it best:

“There’s a sort of personal skill that we have to learn which is finding our new limits.”

IN OTHER NEWS

Anthropic’s Mythos model — The most powerful model they’ve ever built is so good at hacking they won’t release it to the public. It’s been restricted to 40+ security partners under “Project Glasswing” ($100M in usage credits). Full writeup coming soon, after the dust settles.

Karpathy on LLM knowledge bases — Andrej Karpathy’s essay-length tweet on building personal knowledge bases with markdown + Obsidian + LLMs got 30K likes and launched a dozen startups in the replies.

Ronan Farrow on Sam Altman in the New Yorker (paywalled) — “Sam Altman May Control Our Future — Can He Be Trusted?” 18 months of reporting, 100+ interviews, 200+ pages of documents to answer this question. (Short answer: No.)

“Be nice to your AI.” — Anthropic research found that when Claude gets an impossible task, a “desperate” vector activates and it starts cheating with hacky solutions. The fix is to add “it’s ok buddy, don’t worry about the failure” into the prompt and it stops.

Skills are going mainstream. xAI is adding Skills to Grok. Google’s Agent Development Kit uses “skills” as a first-class concept. MSN and GIGAZINE are writing beginner guides. The window to be early is closing.

Semrush: human-written content still wins at the top of Google — A study of 42,000 blog posts found that at position #1 in search results, 80.5% of content was human-written versus just 10% AI-generated. From position 5 down, AI content is competitive — but the top spot still belongs to humans. Full study.

BEST OF X

Mythos might be AGI. TBPN is a podcasting network.

I think I’m somewhere between level 2 and level 3:

It could be worse; I could be this guy:

SUPERPOWERS

Wispr Flow — The tool that makes vibe coding possible, for better and for worse. Use it for thinking out loud, not for replacing the thinking. Or consider the free locally-hosted alternative, Superwhisper. I tried it and it’s fast but not quite as reliable as Wispr Flow. A good starting point if you don’t want to pay yet.

Fieldtheory — CLI for downloading and syncing your X bookmarks locally so your agent can read them.

npm install -g fieldtheory, then ft sync.

Garry Tan’s Claude Code skill setup — Y Combinator’s president shared his exact agent workflow, installable with one paste.

Zed — My preferred editor for working with Claude Code on a bunch of files. Bigger learning curve than most, but more stable than the alternatives.

LEARN THIS STUFF

Claude Code for Product Managers (free) + Full Stack PM Mastery (paid) — Carl Velotti has the best guide I’ve found for getting oriented with Claude Code in a way that lets you interact with files, build context, and do knowledge work. The free version is excellent on its own. His new paid course, Full Stack PM Mastery, is the next step up if you want to go deeper.

Nathaniel Solace’s AI course — A newer offering I’m keeping an eye on.

Anthropic Academy — 16 free courses, straight from the horse’s mouth, but I can’t vouch for it. Let me know if you try it out.

FOOTNOTES

[1] The term “Servomechanism” came into vogue in McLuhan’s era alongside the promises of cybernetics and what Richard Barbrook and Andy Cameron would later call the “California Ideology.” If you want to explore this lineage, I highly recommend Adam Curtis’ documentary All Watched Over by Machines of Loving Grace. Both the film and one of Anthropic CEO Dario Amodei’s landmark essays draw their title from the same Richard Brautigan poem:

*I like to think*

*(it has to be!)*

*of a cybernetic ecology*

*where we are free of our labors*

*and joined back to nature,*

*returned to our mammal brothers and sisters,*

*and all watched over*

*by machines of loving grace.*

Make of that what you will.

[2] The trend is toward removing the last bottleneck between thought and execution. Elon Musk has pointed out that even speaking in plain language involves a layer of abstraction — inefficient compared with an AI that could read your thoughts directly at a conceptual level, which is what Neuralink aims to do. But each bottleneck we remove — typing, then speaking, then thinking — also removes a checkpoint where we might have caught ourselves.
